Cloud Email Security: Shared Responsibility Model

News headlines are full of data breaches, with millions of records leaked at a time. We’re talking about user accounts, passwords, and other credentials that let attackers get into systems and exploit the information available.

This is why security matters to all of us: the moment we handle data, the security of large-scale systems becomes a major concern for everyone.

This post looks at how responsibility is shared between multiple providers, and how to securely leverage the cloud to your advantage. (We will often refer to AWS, because we know their systems well, but most concepts apply equally across all major cloud providers.)

But first, let’s address the perennial question…

Is cloud computing secure?

We agree with the former White House CIO, Vivek Kundra, who said that cloud computing is often far more secure than traditional computing because Google and Amazon can attract and retain cybersecurity personnel of a higher quality than many [companies] and governmental agencies.

These highly focussed personnel are akin to professional athletes in big sports teams, who practice five days a week and then play important matches on the weekends. They start proficient, but their amount of specialised practice means that they’re constantly iterating and getting better at what they do. When game day comes, the amateurs who show up only on weekends for a bit of exercise are no match for the professionals who regularly practice game day scenarios Monday to Friday.

In a security context, the clickbait news headlines might make you think that cloud computing is not secure, but the big cloud providers (such as AWS, Azure, Alibaba, Google Cloud, and IBM Cloud) thwart attempted attacks every day. They are much, much better prepared for it than most companies that run their systems on-premises, and we don’t think there are a lot of companies that have spare terabits per second of bandwidth available in their datacentre, let alone some to waste on cyberattacks.

In short, the big cloud providers are experts at stopping bad actors, and we’d much rather have them on the field with us than a hobbyist who doesn’t practice – and tries to assume every field position himself.

Cloud email security: Whose role is it?

According to a recent Oracle and KPMG Cloud Threat Report, “only eight percent of cyber leaders fully understand their team’s role in securing [the] cloud versus the [role of their] cloud service provider”.

That’s scary.

As more telcos transition their applications to the cloud, it’s important for technical teams to seek clarity about who is responsible for what when it comes to cloud email security.

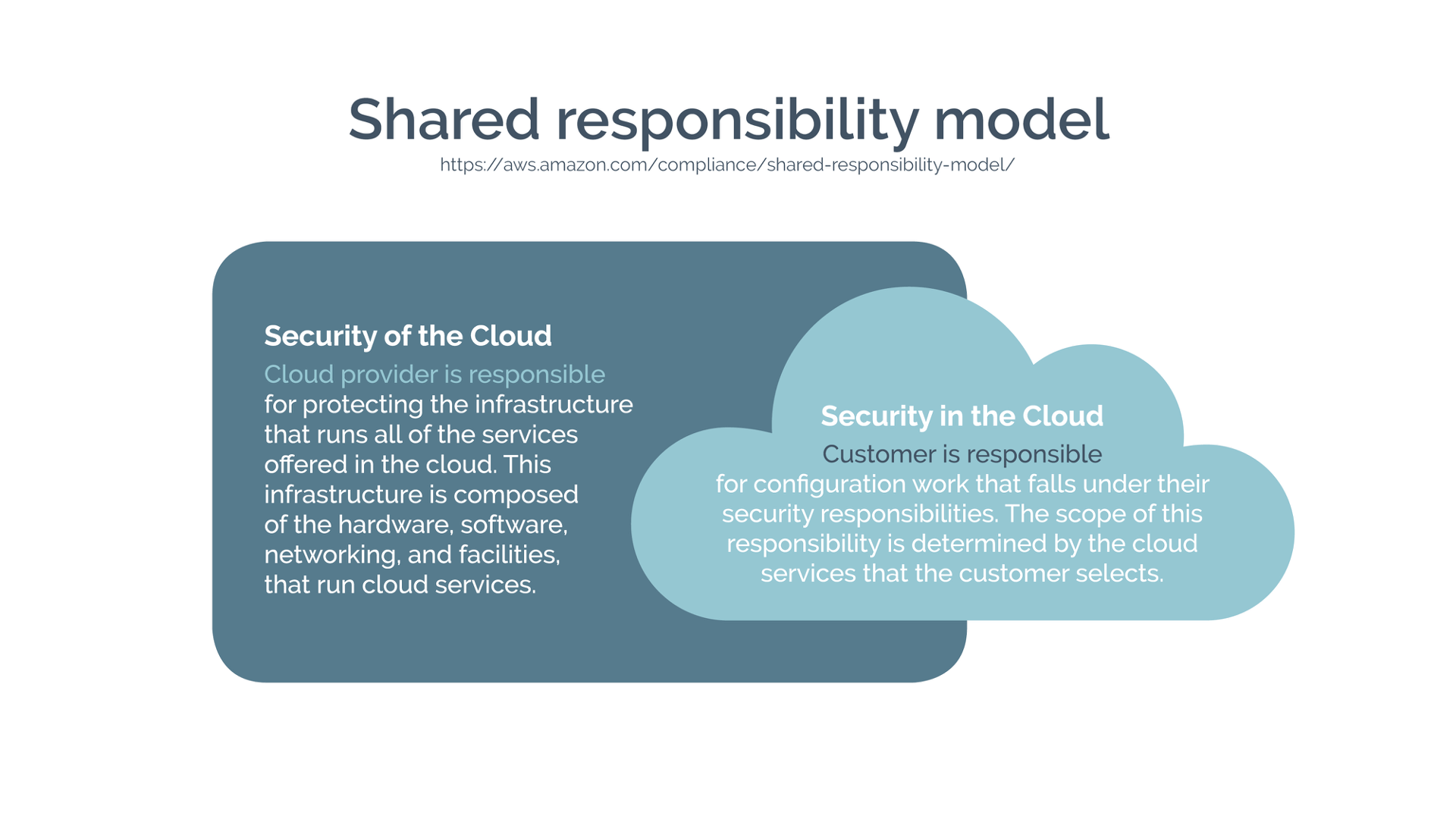

Shared responsibility model

When we talk about security, one of the important things to remember is that we need to have a holistic view of security, because security is only as strong as your weakest point. Usually, the weak points fall into the grey areas of shared responsibility – where you assume someone else is responsible for something, and they assume that you are.

AWS articulate shared responsibility well, by defining it as:

- Security of the cloud; and

- Security in the cloud.

So, what do we mean by security of the cloud? It means the infrastructure and the way it runs: the facilities, hardware, software, networking, and the like. The cloud provider treats the payload that we put in there more or less as a black box, and just makes sure it runs safely and securely. They also provide us with a lot of tools to secure the way we run that payload.

As consumers of the cloud service provider’s infrastructure, we are then responsible for securing what we put in there. That means the configuration that falls under our responsibility: following practices such as least privilege access, and having, say, firewall rules in place. There is no point in the cloud provider having very safe infrastructure if everybody can get to it.
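To make least privilege a little more concrete, here is a minimal sketch of the kind of check a team might run over its own access policies. It assumes policies shaped like AWS IAM JSON documents (a `Statement` list with `Effect`, `Action` and `Resource`); the function names and the example policy are hypothetical, and a real review would look at far more than wildcards.

```python
from typing import Any


def _as_list(value: Any) -> list:
    """IAM-style fields may hold a single string or a list of strings."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def find_overly_broad_statements(policy: dict) -> list[dict]:
    """Return Allow statements that grant every action or cover every resource."""
    flagged = []
    for statement in _as_list(policy.get("Statement")):
        if statement.get("Effect") != "Allow":
            continue
        actions = _as_list(statement.get("Action"))
        resources = _as_list(statement.get("Resource"))
        # A bare "*" means "anything", which is the opposite of least privilege.
        if "*" in actions or "*" in resources:
            flagged.append(statement)
    return flagged


# Hypothetical policy, for illustration only.
example_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": "s3:GetObject",
            "Resource": "arn:aws:s3:::example-mail-archive/*",
        },
        {"Effect": "Allow", "Action": "*", "Resource": "*"},
    ],
}

broad = find_overly_broad_statements(example_policy)
print(f"{len(broad)} statement(s) need tightening")
```

The first statement is scoped to one action on one bucket, so it passes; the second grants everything to everyone who holds the policy, which is exactly the kind of configuration that remains the customer’s responsibility.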

Pizza as a service

In order to talk this through in a bit more detail, we’d like to give a concrete example of how an ‘as a service’ is set up. Rather than dissecting a random application, let’s talk about something that most of us like: pizza.

Say that you want to run a pizza-as-a-service. In the good old world of homemade pizza, you are basically responsible for everything – from the utilities to the conversation. Now, you may think this is perfectly fine and you can deal with everything by yourself. But, think back to the last dinner party that you organised with friends, and you’ll probably remember that you were so busy working in the kitchen that you couldn’t really pay attention to your friends or their conversation. This is not the best outcome and it serves as a reminder of the difficulty in doing everything solo – and still doing it well.

For example, if you’re making woodfired pizza, what happens if your oven cracks? Are you going to fix it yourself with fire bricks and refractory cement? Or are you going to call someone who is specialised in that? And the same could be said of utilities. What if the electricity goes down? Are you going to fix it yourself, bring your own source of power, or call a specialist? And for your woodfired oven, do you have a fire extinguisher and fire blanket handy? Do you even know how to use them?

To summarise, if you are in the business of making pizza, you might want to make the pizza yourself and deal with all of the hiccups without any assistance. But if you are in the party business and pizza is only one of the services that you provide, you might want to seek assistance for some of your pizza making and focus instead on the party aspect of your business.

Back in the context of cloud email security, the more you move to the right in the pizza diagram, the more managed your service is, and the smaller your security footprint in your area of responsibility.

If you decide not to do all of the tasks yourself, you can leverage some of the higher-level services, such as containers as a service (or managed Kubernetes instances), platform as a service, managed services like a managed database, or even function as a service (AWS Lambda), where you concentrate only on the actual function, which is purely your business value.

But it doesn’t necessarily apply to all your workload. In the cloud adoption framework, you have certain approaches that you can mix and match. You may move one workload to the cloud but keep another on-premises. As per the AWS 6 R’s model, you can rehost (aka “lift and shift”), refactor, or even repurchase. Repurchase means that instead of trying to migrate that workload yourself, you buy a product off the shelf, and this is what a software as a service solution will provide you. You can just concentrate on the conversation.

So, in the case of email as a service, this is exactly what atmail provides: all you have to do is make sure that the configuration is right and that the look and feel of the webmail matches the rest of your digital assets. This reduces the number of things you have to take care of.

Likewise, behind the scenes, we are a company specialising in email, and that’s where our value add is. So, we don’t try to do the heavy lifting for every other aspect (e.g. caching, log aggregation, cloud infrastructure) ourselves – only the email part.

The same should apply in your business. Focus on where your value add is, and don’t try to do the heavy lifting of solutions which are not part of your core business expertise.

Email security: leveraging the cloud

This is where the cloud really helps as well, because it is fully leveraging economies of scale of absolutely humongous data centres full of servers. And cloud providers can afford to pay a lot for bandwidth. When they created their infrastructure, they had to make sure that they could maintain their vast amounts of physical resources, so they did a really thorough job of making them configurable through APIs.

As it turns out, the tools that they developed are available to you (or your cloud email provider) as consumers of their cloud services. When you move to the cloud, you benefit from these really mature sets of tools that you can leverage to your advantage. So, now you can easily control your identity and access management. You can get all your logs stored in one place. You can have a trail of all the API calls. You can also have an API to capture network traffic if you want to.

From a security context, it’s incredibly valuable to be able to leverage pre-existing tools rather than needing to build your own. And because everything is an API, you can now describe your infrastructure as code, and describe the intent rather than the method to get there, and then have an engine execute on that and bring up your infrastructure. And that means that you can have that in your continuous integration, deployment and compliance pipelines.
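As a rough illustration of “describe the intent rather than the method”, the sketch below compares a desired state with the current state and works out the steps an engine would need to take. The resource names and the `plan_changes` function are hypothetical; real infrastructure-as-code tools do considerably more (dependency ordering, state locking, and so on).

```python
def plan_changes(
    desired: dict[str, dict], actual: dict[str, dict]
) -> list[tuple[str, str]]:
    """Compare desired and actual resources and return (action, name) steps."""
    steps = []
    for name in sorted(desired):
        if name not in actual:
            steps.append(("create", name))
        elif desired[name] != actual[name]:
            steps.append(("update", name))
    for name in sorted(actual):
        if name not in desired:
            steps.append(("delete", name))
    return steps


# The intent: what we want to exist (hypothetical resources).
desired = {
    "mail-frontend": {"instances": 3, "port": 443},
    "mail-store": {"instances": 2, "port": 993},
}

# What is currently running (also hypothetical).
actual = {
    "mail-frontend": {"instances": 2, "port": 443},
    "legacy-relay": {"instances": 1, "port": 25},
}

plan = plan_changes(desired, actual)
for action, name in plan:
    print(action, name)
```

Because the plan is just data, it can be reviewed, tested and checked against policy in a pipeline before anything is actually changed.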

You can check, for instance, that you’re not opening port 22 (SSH) to the Internet before deploying a workload. This is a big trend in the industry: you’ve probably seen the word DevSecOps everywhere you go. You can add further checks to that CI/CD pipeline, such as checking for credentials in your source code, making sure that your workload is secure, and making sure that you will pass your compliance checks, which could include things like CIS hardening. It can go all the way up to running fuzzing on your code base or checking for dependencies which have known security issues.
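Two of these pipeline checks are simple enough to sketch. The example below assumes firewall rules are available as plain dictionaries (the `protocol`, `from_port`, `to_port` and `cidr` keys are a hypothetical shape, not a provider API) and uses the widely used `AKIA` prefix pattern to spot AWS access key IDs in source text. Dedicated scanners cover many more rule types and secret formats.

```python
import re

# CIDR blocks that mean "anyone on the Internet".
WORLD = {"0.0.0.0/0", "::/0"}

# Long-term AWS access key IDs are 20 characters starting with "AKIA".
ACCESS_KEY_PATTERN = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


def rules_exposing_ssh(rules: list[dict]) -> list[dict]:
    """Return inbound TCP rules that open port 22 to the whole Internet."""
    exposed = []
    for rule in rules:
        covers_ssh = rule["from_port"] <= 22 <= rule["to_port"]
        if rule["protocol"] == "tcp" and covers_ssh and rule["cidr"] in WORLD:
            exposed.append(rule)
    return exposed


def lines_with_access_keys(source: str) -> list[int]:
    """Return 1-based line numbers that appear to contain an access key ID."""
    return [
        number
        for number, line in enumerate(source.splitlines(), start=1)
        if ACCESS_KEY_PATTERN.search(line)
    ]


# Hypothetical rules for a workload about to be deployed.
candidate_rules = [
    {"protocol": "tcp", "from_port": 443, "to_port": 443, "cidr": "0.0.0.0/0"},
    {"protocol": "tcp", "from_port": 22, "to_port": 22, "cidr": "0.0.0.0/0"},
    {"protocol": "tcp", "from_port": 22, "to_port": 22, "cidr": "10.0.0.0/8"},
]

# The key below is the placeholder value used in AWS documentation.
candidate_source = 'region = "eu-west-1"\nkey = "AKIAIOSFODNN7EXAMPLE"\n'

ssh_findings = rules_exposing_ssh(candidate_rules)
key_findings = lines_with_access_keys(candidate_source)

if ssh_findings or key_findings:
    print("Deployment blocked:", len(ssh_findings), "open SSH rule(s),",
          len(key_findings), "line(s) with credentials")
```

In a pipeline, a non-empty result from either function would fail the build, so the problem is caught before the workload ever reaches production.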

So, if you (or your cloud email provider) are using these pre-existing cloud tool sets, you’ve effectively just got a boost to your cloud email team, in terms of insights and tooling for the exact amount of time needed. And those actually evolve quite rapidly.

Cloud is often associated with dynamic scaling of compute resources. Traditionally, planning capacity for a workload requires estimating the peak load and provisioning for that (including some safety margin). In the cloud, most people deploy what is required to run the workload and scale up and down as needed. In email, the workload typically peaks Monday to Friday around 9-10 am, at lunchtime, and at 4-5 pm.
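A small worked example shows why this matters. The hourly figures below are invented purely for illustration (they only mimic the morning, lunchtime and late-afternoon peaks), and the 25% safety margin is likewise an assumption.

```python
import math

# Hypothetical weekday demand in "servers needed" for each hour, 00:00 to 23:00.
hourly_demand = [
    1, 1, 1, 1, 1, 1, 2, 3, 5, 8, 6, 5,   # 00:00-11:00, peak around 09:00
    7, 5, 4, 5, 8, 6, 4, 3, 2, 2, 1, 1,   # 12:00-23:00, peaks at lunch and 16:00
]

SAFETY_MARGIN = 0.25  # assumed headroom on top of the estimated peak


def fixed_capacity_hours(demand: list[int], margin: float) -> int:
    """Server-hours used when provisioning for the peak, plus margin, all day."""
    peak_servers = math.ceil(max(demand) * (1 + margin))
    return peak_servers * len(demand)


def scaled_capacity_hours(demand: list[int]) -> int:
    """Server-hours used when capacity follows demand hour by hour."""
    return sum(demand)


fixed = fixed_capacity_hours(hourly_demand, SAFETY_MARGIN)
scaled = scaled_capacity_hours(hourly_demand)
print(f"fixed: {fixed} server-hours, scaled: {scaled} server-hours")
```

With these made-up numbers, provisioning for the peak uses close to three times the server-hours of scaling with demand. Real scaling is never perfectly elastic, so treat the gap as an upper bound rather than a promise.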

Whilst dynamic scaling is well understood for compute resources, it can also be applied to a range of services provided by the cloud providers, or even third parties. As previously outlined, there are some very advanced security services out there. Running them 24/7 across your workload may be prohibitively expensive; however, switching them on for a few days or weeks to get to the bottom of a very tricky issue is easily done and doesn’t cost a lot. Again, this wouldn’t be feasible in an on-premises environment, where implementing new features needs to be thoroughly thought through, planned, and then executed. In the cloud, the providers and third parties are busy developing new tools and services for customers around the world; you can benefit from them as and when you need.

Cloud providers do the heavy lifting

In 2006, when Amazon started Amazon Web Services (AWS), Jeff Bezos said in an interview, “There’s a lot of undifferentiated heavy lifting that stands between your idea and that success”. You could spend your time integrating the APIs of your switches and servers. You could spend time building a CI/CD pipeline to deploy your services, servers, patches, whatever. But does it make sense from a business perspective? Probably not.

If you want to understand what’s happening with your cloud security at the moment, you can still use event tracing or management software such as Splunk. But you could also aggregate the events that you get from sources such as CloudWatch and have them processed by an artificial intelligence based assessment service such as Amazon GuardDuty, which does exactly that. It learns, establishes a baseline, and then lets you know when something deviates from that baseline.
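To give a feel for the “establish a baseline, then flag what falls outside it” idea, here is a deliberately simple statistical sketch. It is not how any managed detection service works internally; it swaps in a basic mean-and-standard-deviation test, and the event counts and the threshold of 3 are assumptions chosen for illustration.

```python
from statistics import mean, pstdev


def build_baseline(history: list[float]) -> tuple[float, float]:
    """Summarise past observations as (average, standard deviation)."""
    return mean(history), pstdev(history)


def is_anomalous(
    value: float, baseline: tuple[float, float], threshold: float = 3.0
) -> bool:
    """Flag a value lying more than `threshold` standard deviations from average."""
    average, spread = baseline
    if spread == 0:
        # No variation in the history: anything different is unusual.
        return value != average
    return abs(value - average) / spread > threshold


# Hypothetical counts of failed logins per hour during a normal period.
history = [4, 6, 5, 7, 5, 6, 4, 5, 6, 5]
baseline = build_baseline(history)

for observed in (6, 40):
    status = "ALERT" if is_anomalous(observed, baseline) else "ok"
    print(observed, status)
```

The value of a managed service is that it maintains far richer baselines across many signals, without you having to build, tune and operate the detection yourself.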

Only a couple of months ago, this was merely a dream for the security folk out there. And now this is just a service. You can turn it on with a click – it’s pretty amazing.

In terms of innovating, this is also easier in a cloud context, because if you want to launch and build a new service, you don’t have to wait a long period of time to provision the service. You can quickly launch a small, elastic, set of resources that will be running the service. You can expose it to the customers without putting your security posture in danger, because this is going to be a separate platform. And then if that service takes off, it’s perfect, you can just provision more resources, and respond to the demand. And again, that would be a separate service from what provides the core of the business, so there’s no issue here. You’ve reduced your blast radius to a minimum. And if that service does not find its market, which can happen if you’re innovating, you can turn it off, reduce the infrastructure and resources affected by it, and move on to your next innovation.

Recap

We believe you cannot secure on-premises environments to the same level as a cloud equivalent at the same cost. But it’s critical that you understand the shared responsibility model and know what you are on the hook for. Cloud providers might provide the infrastructure, tools and some of the services certified against pretty much all the security frameworks out there, but you still have to take care of the things that are within your responsibility. For instance, if you’re using virtual machines, the operating system needs to be secured in a certain way (for example, if you need to comply with PCI-DSS). Your practices, or those of your cloud email provider, also need to be compliant. And because you have all of these tools, it’s easier to talk to auditors and show them that you have traceability (who, what, when, how) all throughout your workload.

To determine your organisation’s level of responsibility, it’s important to decide what type of service your business needs: Do you want to operate a DIY woodfired pizzeria, and be responsible for the oven, fire and lights? Or do you want to operate a party service, where you order in the best pizza in town, while you focus on the entertainment of the guests? You then need to adapt your threat model to what you’re doing in the cloud and design your cloud email security accordingly.

Written by Gildas Le Nadan and Dirk Marski

New to atmail?

atmail is an email solutions company with 22 years of global email expertise. You can trust us to deliver an email hosting platform that is secure, stable and scalable. We power more than 170 million mailboxes worldwide and offer modern, white-labelled, cloud hosted email with your choice of US or (GDPR compliant) EU data centres. We also offer on-premises webmail and/or mail server options. To find out more, we invite you to enquire here.